The second formula is appropriate when we are evaluating the impact of one set of predictors above and beyond a second set of predictors (or covariates). The first formula is appropriate when we are evaluating the impact of a set of predictors on an outcome. Where u and v are the numerator and denominator degrees of freedom. Pwr.f2.test(u =, v =, f2 =, sig.level =, power = )

Linear Modelsįor linear models (e.g., multiple regression) use Cohen suggests that r values of 0.1, 0.3, and 0.5 represent small, medium, and large effect sizes respectively. We use the population correlation coefficient as the effect size measure. Where n is the sample size and r is the correlation. Pwr.r.test(n =, r =, sig.level =, power = ) Where k is the number of groups and n is the common sample size in each group.įor a one-way ANOVA effect size is measured by f whereĬohen suggests that f values of 0.1, 0.25, and 0.4 represent small, medium, and large effect sizes respectively. (k =, n =, f =, sig.level =, power = ) You can specify alternative="two.sided", "less", or "greater" to indicate a two-tailed, or one-tailed test. (n1 =, n2=, d =, sig.level =, power = )įor t-tests, the effect size is assessed asĬohen suggests that d values of 0.2, 0.5, and 0.8 represent small, medium, and large effect sizes respectively. Where n is the sample size, d is the effect size, and type indicates a two-sample t-test, one-sample t-test or paired t-test. Type = c("two.sample", "one.sample", "paired"))

Pwr.t.test(n =, d =, sig.level =, power = , (To explore confidence intervals and drawing conclusions from samples try this interactive course on the foundations of inference.) t-testsįor t-tests, use the following functions: Your own subject matter experience should be brought to bear. Cohen's suggestions should only be seen as very rough guidelines. ES formulas and Cohen's suggestions (based on social science research) are provided below. Specifying an effect size can be a daunting task. Therefore, to calculate the significance level, given an effect size, sample size, and power, use the option "sig.level=NULL". functionįor each of these functions, you enter three of the four quantities (effect size, sample size, significance level, power) and the fourth is calculated. Some of the more important functions are listed below. The pwr package develped by Stéphane Champely, impliments power analysis as outlined by Cohen (!988). Given any three, we can determine the fourth. power = 1 - P(Type II error) = probability of finding an effect that is there.significance level = P(Type I error) = probability of finding an effect that is not there.The following four quantities have an intimate relationship: If the probability is unacceptably low, we would be wise to alter or abandon the experiment. Conversely, it allows us to determine the probability of detecting an effect of a given size with a given level of confidence, under sample size constraints. It allows us to determine the sample size required to detect an effect of a given size with a given degree of confidence. I would appreciate if someone can help me out here.Power analysis is an important aspect of experimental design. Does that mean I have covered the minimum requirements? I don't have a textbook or journal article to cite this rule either. I heard there is a rule 'you need to have more cases in each cell than you have DVs'. 
I was wondering if this is an acceptable statistical result (so that results would be considered for publication), or whether there is a minimum sample size that should be reached. So in this case I won't make a Type II error, as I will reject the null hypothesis. My results show a significant difference despite this low power. By the low power of my sample size I have a very large chance to make a Type II error (to accept the null hypothesis even though it is false). Given that my alpha remains at 0.05 (in Gpower the graphs show that) I have no chance to make a Type I error. In RStudio the results are marked with three asterisk ***. I have checked the significance of my results and found they are strongly significant. However, that aside, my concern is whether there is a minimum sample size that is acceptable. I understand that this is not optimal because then I have uneven samples in groups (i.e. Instead I have 11 samples from 11 random participants.

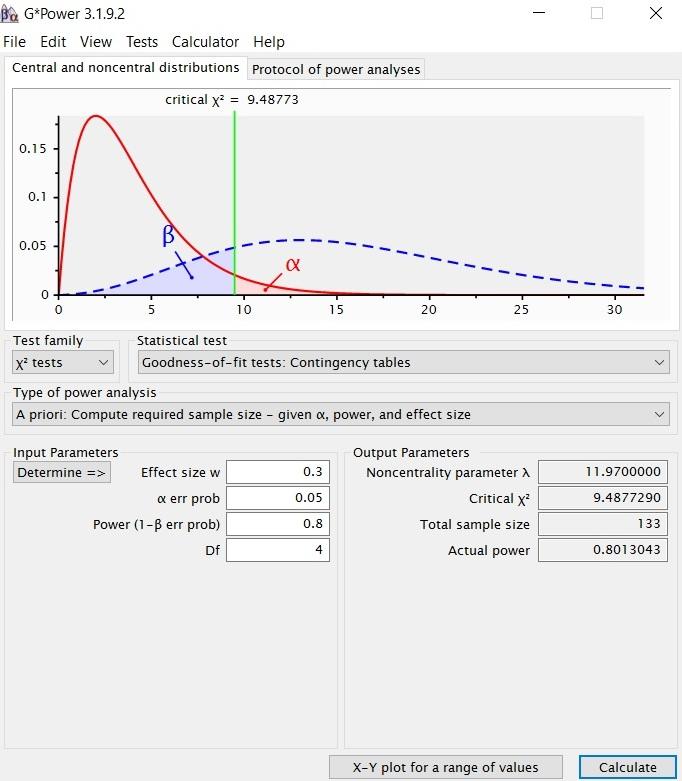

I had used g*power to recommend a sample size, however I have not yet achieved this recommended sample size. I am testing two DV and two IV using a two-way MANOVA.
